The AI engineering intelligence platform

Measure AI impact. Track efficiency across every tool. Enforce AI governance. iftrue is the one platform that turns Copilot, Cursor, and Claude Code usage into provable business outcomes.

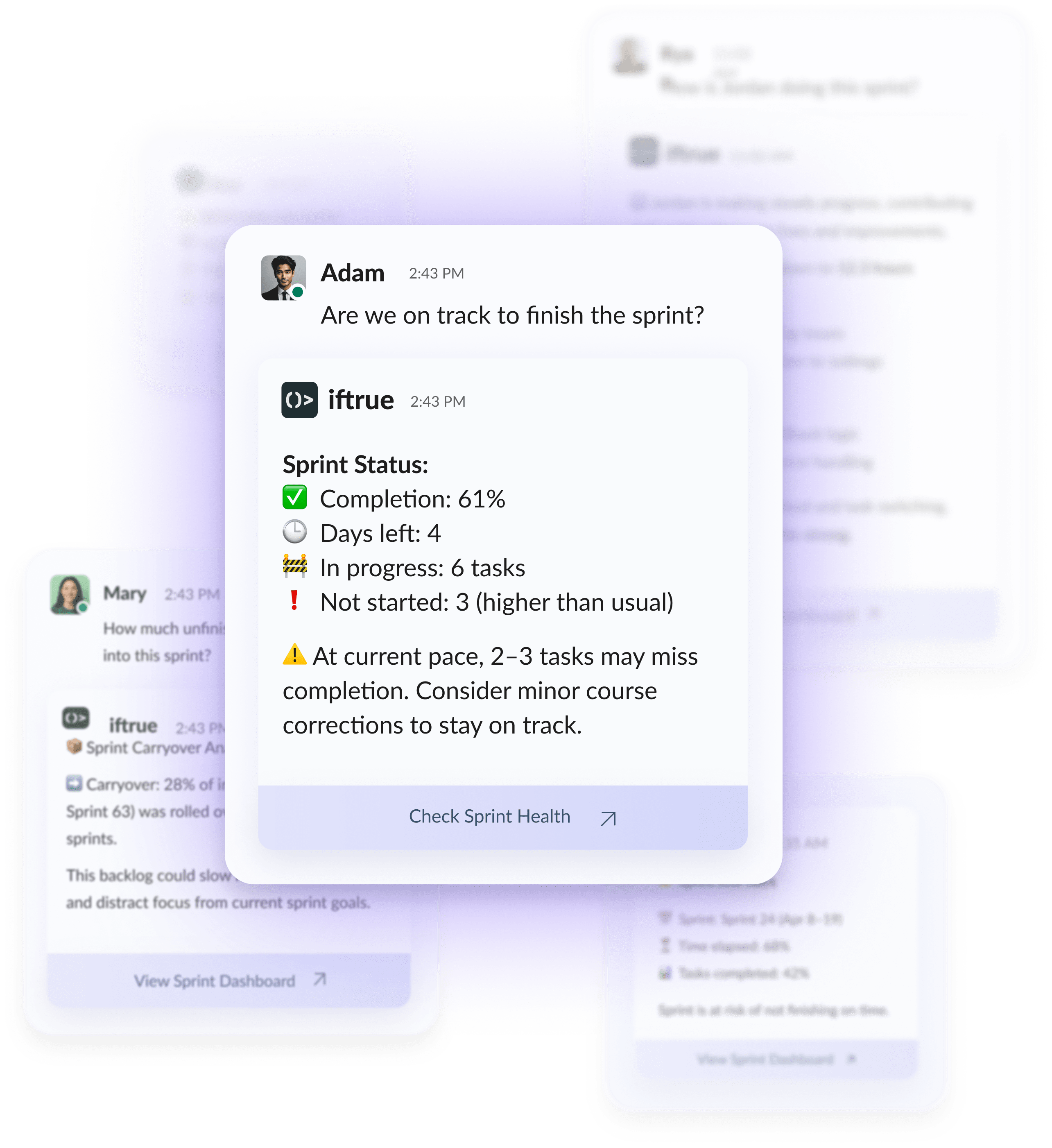

iftrue is the AI engineering intelligence platform for measuring AI impact, tracking AI efficiency, and enforcing AI governance across every tool your developers use. It unifies signals from GitHub Copilot, Cursor, Claude Code, and other AI coding assistants into a single dashboard, so CTOs and VPs of Engineering can prove ROI, scale adoption, spot rework, and stay compliant with the EU AI Act.

The three pillars

Everything engineering leaders need to measure, scale, and govern AI

- AI Impact Measurement

Prove the ROI of every AI coding tool. Track acceptance rate, rework, cycle time, and cost across Copilot, Cursor, and Claude Code, with code-level precision.

- AI Efficiency Analytics

Go beyond adoption metrics. See which teams turn AI suggestions into real velocity, where rework is eating your gains, and how to coach developers to get more from every AI tool.

- AI Governance

Set policies. Enforce them where developers work. Ship AIBOM reports, prepare for EU AI Act Phase 3 (August 2026), and align your AI coding stack with OWASP's agentic top 10.

Works with every AI coding tool your team uses

Also supports Sourcegraph Cody, Tabnine, Amazon Q Developer, and JetBrains AI. Single dashboard. Tool-agnostic ingestion. New tools added within 30 days of enterprise launch.

The AI-era engineering intelligence platform

Adoption signals

See who's actually using AI. Active users per tool, seat utilization, feature-level usage (chat vs autocomplete vs agent mode). Find teams ready to scale and teams stuck in autocomplete mode.

Efficiency metrics

Go deeper than acceptance rate. iftrue correlates AI suggestions with downstream outcomes like time-to-merge, revision count, and merge success rate to calculate a composite efficiency score for every developer, team, and AI tool.
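As a rough illustration of what a composite score like this could look like, the sketch below normalizes each signal to a 0 to 1 range and takes a weighted average. The field names, caps (`max_hours`, `max_revisions`), and weights are assumptions made up for this example, not iftrue's actual formula.

```python
from dataclasses import dataclass


@dataclass
class SuggestionOutcomes:
    """Aggregated outcomes for one developer, team, or tool (hypothetical shape)."""
    acceptance_rate: float        # accepted / shown suggestions, in [0, 1]
    merge_success_rate: float     # merged PRs / opened PRs, in [0, 1]
    median_hours_to_merge: float  # time-to-merge, in hours
    mean_revisions_per_pr: float  # revision count per PR


def composite_efficiency(o, max_hours=72.0, max_revisions=5.0):
    """Return a 0-100 score where higher is better. Weights are illustrative."""
    # Faster merges and fewer revisions score closer to 1; values past the cap score 0.
    speed = 1.0 - min(o.median_hours_to_merge, max_hours) / max_hours
    stability = 1.0 - min(o.mean_revisions_per_pr, max_revisions) / max_revisions
    score = (
        0.2 * o.acceptance_rate
        + 0.3 * o.merge_success_rate
        + 0.25 * speed
        + 0.25 * stability
    )
    return round(100 * score, 1)


team_a = SuggestionOutcomes(0.30, 0.90, 18.0, 1.5)
print(composite_efficiency(team_a))
```

Acceptance rate gets the smallest weight here on purpose: a team that accepts many suggestions but merges slowly or revises heavily should not score well.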

Quality and rework

Spot the silent cost of AI. Track rework rate on AI-generated code, bug density, test coverage delta, and post-merge regression rates. When AI is creating more work than it saves, you'll see it first.

Outcome metrics

Connect AI usage to DORA. Cycle time, lead time, deployment frequency, and change failure rate, segmented by AI-assisted vs human-authored changes. Prove, team-by-team, whether AI moves the business.
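A minimal sketch of this kind of segmentation, assuming each merged change carries an `ai_assisted` flag, a cycle time in hours, and a flag for whether it caused a production failure. The record shape and the sample values are hypothetical and only show the grouping logic.

```python
from statistics import median


def segment_metrics(changes):
    """Group changes by AI assistance; return median cycle time and change failure rate."""
    segments = {}
    for change in changes:
        key = "ai_assisted" if change["ai_assisted"] else "human_authored"
        segments.setdefault(key, []).append(change)

    report = {}
    for key, rows in segments.items():
        report[key] = {
            "changes": len(rows),
            "median_cycle_hours": median(r["cycle_hours"] for r in rows),
            # Share of changes in this segment that led to a failure.
            "change_failure_rate": sum(r["caused_failure"] for r in rows) / len(rows),
        }
    return report


# Made-up sample records, for illustration only.
sample_changes = [
    {"ai_assisted": True, "cycle_hours": 10.0, "caused_failure": False},
    {"ai_assisted": True, "cycle_hours": 20.0, "caused_failure": True},
    {"ai_assisted": False, "cycle_hours": 30.0, "caused_failure": False},
    {"ai_assisted": False, "cycle_hours": 40.0, "caused_failure": False},
]
report = segment_metrics(sample_changes)
print(report)
```

Comparing the two segments side by side is what shows whether faster cycle time on AI-assisted changes comes at the cost of a higher change failure rate.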

Built for the people measuring AI at your company

- CTOs and VPs of Engineering

- Defend AI spend at budget reviews, decide which tools to standardize on, and report AI ROI to the board with data, not vibes.

- Platform and DevEx Leads

- Spot low-adoption teams, design enablement programs, and run structured experiments to figure out which AI tools actually move the needle.

- Engineering Managers

- Coach individuals. Spot developers shipping high-rework AI code vs those turning AI into real velocity. Protect team health with data-backed 1:1s.

30 minutes from install to first insight

1. Connect Git.

   GitHub, GitLab, or Bitbucket. Read-only.

2. Connect your AI tool admin APIs.

   Copilot, Cursor, Claude Code, whatever your team uses.

3. See your first AI impact report the same day.

   No codebase access. No disruption to developers.

Enterprise-ready: GDPR-compliant, self-hosted deployment available for regulated industries.

How a global bank calibrated AI tool spend with per-team data

“Before iftrue we had no way to tell if AI tools were actually making us faster. Now we see AI code ratio, churn, and prompt-to-merge per team, and we sponsor the tools that prove their worth.”

Frequently asked questions

What is AI impact measurement?

AI impact measurement is the practice of quantifying how AI coding tools like Copilot, Cursor, and Claude Code affect software delivery outcomes. It spans adoption, efficiency, quality, and cost, and connects AI usage to business results like cycle time and change failure rate.

Does iftrue require access to our source code?

No. iftrue measures AI impact through metadata: PR events, commit metadata, tool admin APIs, and Git logs. We never ingest your codebase.

Which AI coding tools does iftrue support?

GitHub Copilot, Cursor, Claude Code, Sourcegraph Cody, Tabnine, Windsurf, Amazon Q Developer, and JetBrains AI. Custom LLM pipelines are supported via webhook. New enterprise tools are added within 30 days of launch.

What's a healthy AI acceptance rate?

Industry benchmarks put a healthy acceptance rate at 25–35% for autocomplete and 40–60% for chat-based suggestions, but raw acceptance is a weak signal on its own. iftrue correlates it with rework rate and merge success to give you a composite efficiency score.

How is iftrue different from DX, Jellyfish, or Swarmia?

DX, Jellyfish, and Swarmia do excellent work on traditional DORA metrics and developer experience surveys. iftrue adds the AI-specific measurement layer on top: multi-tool adoption, acceptance/rework analysis, and AI-attributed outcome changes. Many teams run iftrue alongside these tools.

Can iftrue help with AI governance and the EU AI Act?

Yes. iftrue ships policy enforcement and AIBOM (AI bill of materials) reporting that maps to EU AI Act Phase 3 requirements (enforcement begins August 2026). Policy violations surface in IDE-level diagnostics and in developer dashboards.

How long does implementation take?

Typical setup is 30 minutes for Git and one AI tool. Full multi-tool deployment and baseline data collection take 7–14 days. Useful insights appear in the first weekly review.

Is iftrue secure and compliant?

Yes. iftrue is GDPR-compliant and never ingests source code. Self-hosted deployment is available for financial services, healthcare, and government customers.

See your AI impact in 30 minutes

Connect GitHub and one AI coding tool. Get your first impact report the same day. No credit card, no codebase access, no developer disruption.